As outlined in the previous post, Barbara Vetter (Potentiality: From Dispositions to Modality, Oxford University Press, 2015) developed the concept of potentiality in her theory of dispositional powers. In that theory, potentials are dispositions that are responsible for the manifestation of possibilities. The possibilities then tend to become actual events or states of affairs. The concept of 'potential' in philosophy, in a sense close to that discussed here, goes back at least to Aristotle in Metaphysics Book \(\Theta\).

In contrast, probabilities are weightings summing to one that describe in what proportion the possibilities tend to appear. I propose that the potential underpins the actual appearance of the possibilities while probability shapes it. This will be discussed further in this post.

Barbara Vetter proposed a formal definition of possibility in terms of potentiality:

POSSIBILITY: It is possible that \(p =_{def}\) Something has an iterated potentiality for it to be the case that \(p\).

So, it is further proposed that the probabilities are the weights that can be measured through this iteration using the frequency of appearances of each possibility. Note that this indicates how probabilities can be measured, but it is not a definition of probability.

In the field of disposition research there is an unfortunate proliferation of terms meaning roughly the same thing. The concept of 'power' brings out a disposition's causal role but so does 'potential'. As technical terms in the field both are dispositions. Now 'tendency' will also be introduced, and it is often used as yet another flavour of disposition.

Tendencies

Barbara Vetter mentions tendencies in passing in her 2015 book on potentiality and, although she discusses graded dispositions, tendencies are not a major topic in that work. In "What Tends to Be: The Philosophy of Dispositional Modality" Rani Lill Anjum and Stephen Mumford (2018) provide an examination of the relationship between dispositions and probabilities while developing a substantial theory of dispositional tendency. In their treatment, powers are understood as disposing towards their manifestations rather than necessitating them. This is consistent with Vetter's potentials.

Tendencies are powers that do not necessitate their manifestations; nonetheless, on iteration the power will cause the possibility to be the case.

In common usage a contingency is something that might possibly happen in the future. That is, it is a possibility. A more technical but still common view is that a contingency is something that could be either true or false. This captures an aspect of possibility, but not completely, because there is no role for potentiality: something responsible for the possibilities. There is also logical possibility, in which anything that does not imply a contradiction is logically possible. This concept may be fine for logic, but in this discussion it is possibilities that can appear in the world that are under consideration. Here an actual possibility needs a potentiality to tend to produce it.

Example (adapted from Anjum and Mumford)

Struck matches tend to light. Although they are disposed to light when struck, we all know that there is no guarantee that they will light, as there are many times a struck match fails to light. But there is an iterated potentiality for it to be the case that the match lights. The lighting of a struck match is more than a mere possibility or a logical possibility. There are many mere possibilities towards which the struck match has no disposition: there is no potential in the match towards melting when struck, for instance.

Iterated potentiality provides the tendency for possible outcomes to show some patterns in their manifestation.

In very controlled cases the number of successes in iterations of match striking could provide a measure of the strength of the power that is this potentiality. This would require a collection of matches that are essentially the same.

Initial discussion of probability

Anjum and Mumford introduce their discussion of probability through a simple example that builds on a familiar understanding of dispositional tendencies associated with fragility.

"The fragility of a wine glass, for instance, might be understood to be a strong disposition towards breakage with as much as 0.8 probability, whereas the fragility of a car windscreen probabilifies its breaking to the lesser degree 0.3. Furthermore, it is open to a holder of such a theory to state that the probability of breakage can increase or decrease in the circumstances and, indeed, that the manifestation of the tendency occurs when and only when its probability reaches one."

This example is merely an introduction and needs further development but already the claim that "the manifestation of the tendency occurs when and only when its probability reaches one" shows that it is not a model for objective probability. What is needed is a theory of dispositions that explains stable probability distributions. Of course, if the glass is broken then the probability of it being broken is \(1\). However, this has nothing to do with the dispositional tendency to break. What is needed is a systemic understanding of the relationship between the strength of a dispositional tendency and the values or, in the continuous case, shape of a probability distribution.

In the quoted example above each power is to be understood in terms of a probability of the occurrence of a certain effect, which is given a specific value. The fragility of a wine glass, for instance, might be understood to be a strong disposition towards breakage with as much as 0.8 probability, whereas the fragility of a car windscreen is less, and the probability of its breaking is the lesser degree 0.3. But given a wine glass or windscreen produced to certain norms and standards, it would be expected that the disposition towards breakage would be quite stable. A glass with a different disposition would be a different glass.

Anjum and Mumford claim that in some understandings the manifestation of a possibility occurs when and only when its probability reaches one (see Popper, "A World of Propensities", 1990: 13, 20). This is a misunderstanding of how probability works. Popper distinguished clearly between the mathematical probability measure and what he called the physical propensity, which is more like a force, but Popper does limit a propensity to have a strength of at most \(1\). As I will attempt to show below, Popper, in proposing the propensity interpretation of objective probabilities, oversimplifies the relationship between dispositions and probabilities. This confusion led Humphreys to draft a paper (The Philosophical Review, Vol. XCIV, No. 4 (October 1985)) to show that propensities cannot be probabilities.

As indeed they are not. They are dispositions. That would leave open the proposition that probabilities are dispositional tendencies, but that also will turn out to be untenable.

The proposal by Anjum and Mumford that powers can over dispose does seem to be sound. Over disposing is where there is a stronger magnitude than what is minimally needed to bring about a particular possibility. This indicates that there is a difference between the notion of having a power to some degree and the probability of the power’s manifestation occurring. Among other conclusions, this also shows that the dispositional tendency does not reduce to probability, preserving its status as a potential.

Anjum and Mumford continue the discussion using 'propensity' as having a tendency to some degree, where degree is non-probabilistically defined. Anjum and Mumford use the notions of ‘power’, ‘disposition’ and ‘tendency’ more or less interchangeably. However, whereas an object may have a power to a degree, there are powers that are simply properties. In what follows I will try to eliminate the use of 'propensity', except where commenting on the usage of others, and use 'tendency' to qualify either 'power', 'potential' or 'disposition' rather than let it stand on its own.

A probability always takes a value within the closed interval from zero to one. If the probability of an event is \(1\), then in probability theory the event is almost certain (it occurs except for a set of cases of measure zero). In contrast to what Anjum and Mumford claim, it is not natural to interpret this as necessity, because there can be exceptions. For cases where there is only a finite set of possibilities, probability \(1\) does mean that there are no exceptions. But as this is a special case in applied probability theory, there is no justification in equating it with logical or metaphysical necessity.
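A standard textbook illustration of probability \(1\) with exceptions, offered here only as a sketch and not taken from Anjum and Mumford: let \(X\) be a number drawn uniformly from the interval \([0,1]\). Then

\[
P\left(X = \tfrac{1}{2}\right) = 0, \qquad P\left(X \neq \tfrac{1}{2}\right) = 1 .
\]

The outcome \(X = 1/2\) is nonetheless one of the possibilities, so the event \(X \neq 1/2\) has probability \(1\) without being necessary.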

A power must be strong enough to reach the threshold to make the possibilities actual. Once the power is strong enough, the probability distribution over the possibilities may be stable or affected by other aspects of the situation. So, instead of understanding powers and their degrees of strength as probabilistic, powers and their tendencies towards certain manifestations are the underpinning grounds of probabilities. Consider the example of tossing a coin.

A coin when tossed has the potential to fall either heads or tails. This tendency to fall either way can be made symmetric, and then the coin is 'fair'. From this, probability weightings of \(1/2\) for each outcome (taking account of the tossing mechanism) can be assumed and then confirmed by measuring the proportion of outcomes on iteration. The reason why the head and the tail events are equally probable statistically, when a fair coin is tossed, is that the coin is equally disposed towards those two outcomes due to its physical constitution. The probability weightings derive, in this example, from a symmetry in the potentiality, which in turn derives from the physical composition and detailed geometry of the coin.
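The confirmation by iteration described above can be sketched in a few lines of Python. This is only an illustration: the function name `toss_frequencies`, the parameter `p_heads` and the fixed seed are assumptions introduced here, and a pseudo-random generator stands in for a well-designed tossing mechanism.

```python
import random


def toss_frequencies(n_tosses, p_heads=0.5, seed=0):
    """Simulate n_tosses of a coin and return the relative frequencies of H and T.

    p_heads is an assumed stand-in for the symmetry of the coin's disposition;
    0.5 corresponds to a 'fair' coin.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    heads = sum(1 for _ in range(n_tosses) if rng.random() < p_heads)
    return {"H": heads / n_tosses, "T": (n_tosses - heads) / n_tosses}


# The measured proportions settle near 1/2 as the number of iterations grows.
for n in (10, 1_000, 100_000):
    print(n, toss_frequencies(n))
```

The weightings are put in by hand through `p_heads`; the simulation only shows how iteration lets them be measured, which is the distinction between measuring a probability and defining it.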

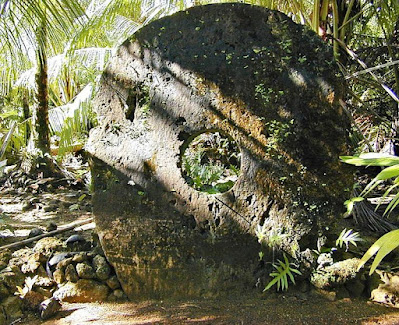

Consider a society that uses very large stone discs as currency. On examination of the disc, it would be possible to conjecture that if it were tossed then there would be two possible outcomes and that those outcomes are equally likely. But this disposition is not realised because of the effort required to construct the tossing mechanism, as such a stone may weigh several metric tons. The enabling disposition that would give rise to the iteration of possibilities would have been this missing tossing mechanism. It is not a property of the disc. The manifestation of the dispositional tendency of the disc to come to lie in one of two states needs an external mechanism that is disposed through design to toss the coin in a certain way. If the mechanism is constructed it may be too weak. It may tend to only flip the coin once, giving a sequence such as

... T H T H T H H T H T H T H T H T H T H ...

that would give a frequency of T close to \(1/2\), but the sequence does not exhibit the potential for random outcomes to which the coin is disposed.
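One way to make this point concrete, as a rough sketch rather than a full statistical test, is to count runs (maximal blocks of identical consecutive outcomes) and compare the count with what would be expected if the same outcomes were randomly ordered. The function names are assumptions introduced here; the expected value is the standard mean from the Wald-Wolfowitz runs test.

```python
def count_runs(seq):
    """Number of maximal blocks of identical consecutive outcomes."""
    return 1 + sum(1 for a, b in zip(seq, seq[1:]) if a != b)


def expected_runs(seq):
    """Mean number of runs if the same H and T counts were randomly ordered."""
    n_h = seq.count("H")
    n_t = seq.count("T")
    return 1 + 2 * n_h * n_t / (n_h + n_t)


# The sequence produced by the too-weak mechanism in the text.
weak = "T H T H T H H T H T H T H T H T H T H".split()

print("frequency of T:", weak.count("T") / len(weak))
print("observed runs: ", count_runs(weak))
print("expected runs: ", round(expected_runs(weak), 2))
```

For this sequence the frequency of T is 9/19, yet there are 18 runs against roughly 10.5 expected under random ordering. The near-perfect alternation reflects the disposition of the mechanism, not that of the coin.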

Probabilities and chance

From the above: potential and possibility are more fundamental than (or prior to) probability. Both are needed to construct and explain objective probability. The alternative, subjective probability, is based on beliefs about possibilities, but that is not the same thing as what is actually possible and how things will appear independently of anyone's beliefs or judgements.

In this blog I have already referred to a dispositional tendency to begin to explain objective probabilities in quantum mechanics. The term propensity has been used to describe these probabilities. I now think that was wrong. Propensity should be reserved for the dispositional tendency that is responsible for the probabilities, so that the term does not merge the underpinning dispositional elements with the probability structure. Anjum and Mumford claim that they have made a key contribution to clarifying the relationship between dispositional tendencies and probability through their analysis of over disposition.

Anjum and Mumford claim "information is lost in the putative conversion of propensities to probabilities", but this is so only if the dispositional grounding of probabilities is forgotten. Their discussion is strongly influenced by their interest in application to medical evidence, where a major goal is reduction of uncertainty. Anjum and Mumford propose two rules on how dispositions and probability relate:

- The more something disposes towards an effect \(e\), the more probable is \(e\), ceteris paribus; and the more something over disposes \(e\), the closer we approach probability \(P(e) = 1\).

- There is a nonlinear ‘diminishing return’ in over disposing. E.g., if over disposing \(e\) by a magnitude \(\times 2\) produces a probability \(P(e) = 0.98\), over disposing \(\times 3\) might increase that probability ‘only’ to \(P(e) = 0.99\), and over disposing \(\times 4\) ‘only’ to \(P(e) = 0.995\), and so on.
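The numbers in the second rule can be reproduced by a simple curve in which each further unit of over disposing halves the remaining gap to \(1\). This halving rule is purely a hypothetical choice made here to fit the three quoted values; Anjum and Mumford do not give a formula, and the function and parameter names are likewise assumptions.

```python
def probability_from_over_disposing(magnitude, base_gap=0.02, base_magnitude=2):
    """Hypothetical 'diminishing return' curve.

    Assumes the gap between P(e) and 1 halves for each extra unit of
    over disposing, starting from a gap of base_gap at base_magnitude.
    """
    return 1 - base_gap * 0.5 ** (magnitude - base_magnitude)


# Each step adds less than the one before, and P(e) approaches but never reaches 1.
for m in (2, 3, 4, 5, 10):
    print(f"x{m}: P(e) = {probability_from_over_disposing(m):.6f}")
```

On any curve of this kind the probability approaches \(1\) only asymptotically, so the strength of the disposition and the value of the probability remain distinct quantities.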

While these rules are fine as propositions, they miss the mark in explaining the relationship between dispositions and probability. In the coin tossing example, strengthening the mechanism is not about strengthening one outcome. Over disposing does provide support for the distinction between the strength of the disposition and the value of the probability, but the relationship between underpinning potentials, dispositional mechanisms, and the iterated outcomes needs to be made clear.

Anjum and Mumford also discuss coin tossing and make substantially the same points as I made above. However, having clarified the distinction between propensity and probability, they revert to using the term propensity in a way that risks confusing the concepts of dispositional tendency and probabilities with random outcomes. They say "50% propensity" rather than "50% probability". They then introduce the term "chance", which they relate to outcomes in some specified situations. Propensity is then reserved by them for potential probability, while chance is the probability of an outcome in a situation. This is more confusing than helpful.

Anjum and Mumford go on to a discussion of radioactive decay, which is known to be described by quantum theory. They make no mention of quantum theory (this will be corrected by them in Chapter 4) and strangely claim that radioactive decay is not probabilistic. The probability distributions derived from quantum mechanics unambiguously give the probability of decay per unit time. There are, per unit time, two possibilities: "decay" or "no decay". Their error is to claim that there is "only one manifestation type" (decay) and to conclude from this that there is only one possibility. Ignoring quantum mechanics, they write:

"The reason it is tempting to think of radioactive decay as probabilistic is that there is certainly a distinct tendency to decay that varies in strength for different kinds of particles, where that strength is specified in terms of a half-life (the time at which there is a 50/50 chance of decay having occurred)."

But no, the reason to think that radioactive decay is probabilistic is that our best theory of nuclear phenomena explains it in terms of probabilities. This misunderstanding leads them to introduce the concept of indeterministic propensities. However they arrived at the concept, it is left open whether there are non-probabilistic indeterministic powers, but radioactive decay is not an example.
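The probabilistic description of decay can be illustrated with the standard exponential decay law, under which the probability that a single nucleus has decayed by time \(t\) is \(1 - 2^{-t/T}\), where \(T\) is the half-life. The sketch below is an illustration only: the function name and the half-life of 10 time units are hypothetical choices, not taken from Anjum and Mumford.

```python
import math


def decay_probability(t, half_life):
    """Probability that a single nucleus has decayed by time t.

    Uses the exponential decay law with decay constant ln(2) / half_life.
    """
    return 1 - math.exp(-math.log(2) * t / half_life)


half_life = 10.0  # hypothetical value, in arbitrary time units

# In any interval there are two possibilities, "decay" and "no decay",
# with weightings that sum to one.
for t in (1.0, 10.0, 20.0):
    p = decay_probability(t, half_life)
    print(f"t = {t:5.1f}: P(decay) = {p:.4f}, P(no decay) = {1 - p:.4f}")
```

At one half-life the two possibilities are weighted equally, which is the 50/50 chance mentioned in the quotation; at other times the weightings differ, but they still form a probability distribution over the two possibilities.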

The examples of the concept chance provided by Anjum and Mumford can be derived in their examples from a correct application of probability theory. Chance is often used as a term for 'objective probability', and I have done so in previous posts. I will continue to follow that usage and exploit the clarification obtained from the analysis above that shows that objective probability depends on the possibilities that are properties of an object. The manifestation of these possibilities may require an enabling mechanism. The statistical regularities displayed by these manifestations on iteration are due primarily to the physical properties of the object unless the enabling mechanism is badly designed.

The term 'propensity' has given rise to much confusion in the literature. Now that we are reaching an explanation of objective probability, the term 'propensity' might better be avoided.

I propose that the model of objective probability is that:

OBJECTIVE PROBABILITY: An object has probabilistic properties if it is physically constituted so that it has a potential to manifest possibilities that show statistical regularities.

It is possible to describe statistical regularities without invoking the term 'probability'.

Although some criticism of Anjum and Mumford is implied here, I recognise that their contribution has done much to disentangle considerations about the strength of dispositions that describe tendencies from a direct interpretation as probabilities. However, the value of the three distinctions they have identified is mixed:

- Chance and probability are not fundamentally distinct and just require a correct application of probability theory.

- Probabilistic dispositional tendencies are distinct from non-probabilistic dispositional tendencies: this is a real and fruitful distinction.

- Deterministic and indeterministic dispositional tendencies also provide a useful distinction, but it remains to be seen whether there are fundamental non-probabilistic indeterministic dispositions.

The next post will continue this theme with a discussion of dispositional tendencies in causality and quantum mechanics, engaging once more with the 2018 book by Anjum and Mumford.